Jenkins is an open-source automation tool written in Java with plugins built for continuous integration purposes. It helps you build and test your software projects continuously, making it easier for developers to integrate project changes and for users to obtain a fresh build. This tool also allows you to continuously deliver your software by integrating with a large number of testing and deployment technologies.

With Jenkins, organizations can accelerate the software development process through automation. It integrates development life-cycle processes of all kinds, including build, document, test, package, stage, deploy, static analysis, and much more.

Jenkins achieves continuous integration with the help of plugins that allow the integration of various DevOps stages. If you want to integrate a particular tool, you need to install the plugin for that tool first. Examples include the Git, Maven 2 project, Amazon EC2, and HTML publisher plugins.

Jenkins Architecture

Of course, there are instances when a single Jenkins server is not enough to meet certain requirements. For example:

- Sometimes you need several different environments to test builds. A single Jenkins server cannot do this.

- A single Jenkins server cannot keep up when large, heavy projects are built on a regular basis.

Jenkins Distributed Architecture is able to address the above-stated needs.

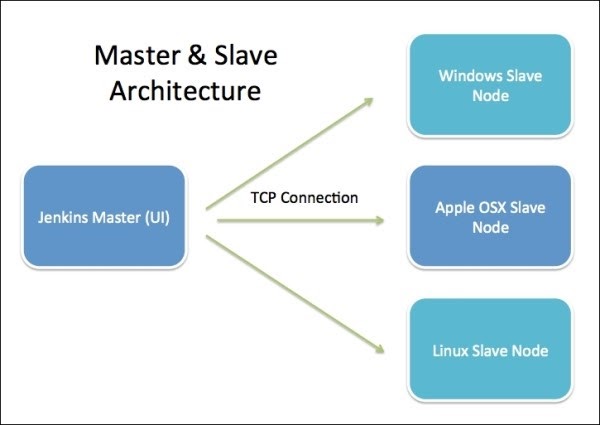

Jenkins Distributed Architecture

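Jenkins Distributed Architecture uses a Master-Slave architecture to manage distributed builds. In this architecture, the Master and the Slaves communicate over TCP/IP. Recent Jenkins releases and documentation call the Master the controller and the Slaves agents; this article keeps the older terms.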

Jenkins Master

The main Jenkins server is the Master. Its job is to handle:

- Scheduling build jobs

- Dispatching builds to the slaves for the actual execution

- Monitoring the slaves (possibly taking them online and offline as required)

- Recording and presenting the build results

- Executing build jobs directly

Jenkins Slave

A Slave is a Java executable that runs on a remote machine. The following are the characteristics of a Jenkins Slave:

- It listens for requests from the Jenkins Master instance.

- A Slave can run on a variety of operating systems.

- The job of a Slave is to “obey” the Master, that is, execute build jobs dispatched by it.

- You can either configure a project to always run on a particular (type of) Slave machine or simply let Jenkins pick the next available Slave.

What’s more, you can use Jenkins for testing in different environments such as Ubuntu, macOS, and Windows.

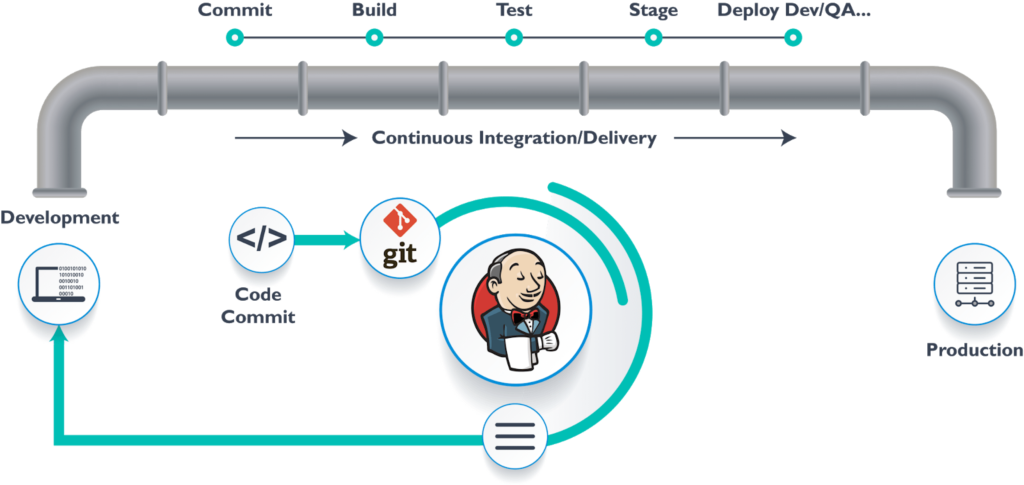

Usually, the interaction between the Master and the Slaves follows this sequence:

- Jenkins checks the Git repository at periodic intervals for any changes made in the source code.

- Each build may require a different testing environment, which a single Jenkins server cannot provide. In order to perform testing in different environments, Jenkins uses various Slaves, as shown in the diagram above.

- Jenkins Master requests these Slaves to perform testing and to generate test reports.
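The sequence above could be sketched in a Jenkinsfile roughly as follows. This is only an illustration under several assumptions: the agent labels `ubuntu` and `windows`, the test script names, and the report path are hypothetical, and the `junit` step assumes the JUnit plugin is installed.

```groovy
// Sketch only: labels, script names, and report paths are hypothetical.
pipeline {
    // No global agent: each stage below picks its own machine.
    agent none

    triggers {
        // Poll the repository for changes roughly every 15 minutes.
        pollSCM('H/15 * * * *')
    }

    stages {
        stage('Test on Ubuntu') {
            agent { label 'ubuntu' }
            steps {
                sh './run-tests.sh'
                // Publish the test report (assumes the JUnit plugin).
                junit 'reports/*.xml'
            }
        }
        stage('Test on Windows') {
            agent { label 'windows' }
            steps {
                bat 'run-tests.bat'
            }
        }
    }
}
```

The Master only schedules the stages here; the actual test commands run on whichever machine carries the matching label.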

Jenkins Pipeline

A Jenkins pipeline is a collection of jobs that brings the software from version control into the hands of the end-users by using automation tools. You can use Jenkins Pipeline to incorporate continuous delivery in your software development workflow.

Over the years, several plugins have provided pipeline functionality, including Jenkins Build Flow, the Jenkins Build Pipeline plugin, and Jenkins Workflow.

What are the key features of these plugins?

- They represent multiple Jenkins jobs as one whole workflow in the form of a pipeline.

- These pipelines are a collection of Jenkins jobs that trigger each other in a specified sequence.

Build Pipeline

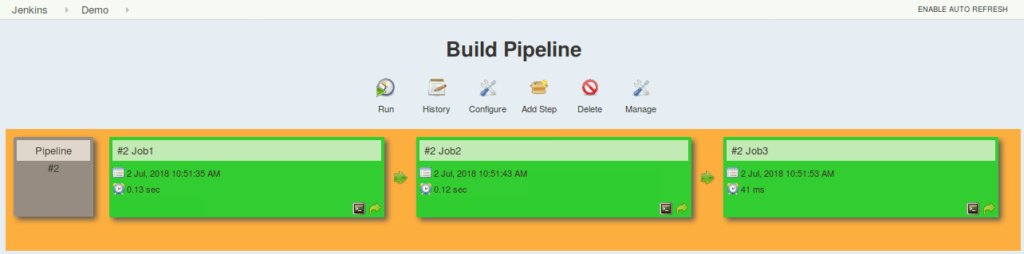

So, let’s suppose that you need to build, test, and deploy a small application with Jenkins. You will need three jobs in order to perform this process.

So, job1 would be for the build, job2 for performing tests, and job3 for deployment. You can use Jenkins Build Pipeline plugin to perform this task. After creating three jobs and chaining them in a sequence, the build plugin will run them as a pipeline.

The image above shows a view of all three jobs, which run one after another in the pipeline.

This approach is effective in deploying small applications. But what happens when there are complex pipelines with several processes (e.g., build, test, unit test, integration test, pre-deploy, deploy, monitor) running hundreds of jobs?

The maintenance cost for such a complex pipeline is huge and increases with the number of processes. It also becomes tedious to build and manage such a vast number of jobs. Jenkins Pipeline Project can overcome this issue.

Jenkins Pipeline Project

The key feature of this pipeline is to define the entire deployment flow through code. So, what does this mean? It means that all the standard jobs defined by Jenkins are manually written as one whole script.

Moreover, you can store the script in a version control system. It basically follows the ‘pipeline as code’ discipline. Instead of building several jobs for each phase, you can now code the entire workflow and put it in a Jenkinsfile.
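As a minimal sketch, the three chained jobs from the Build Pipeline example (build, test, deploy) might collapse into a single file like the one below. The `make` targets are placeholders for whatever commands your project actually uses.

```groovy
// One file stands in for the three separate jobs.
// The make targets are placeholders, not real project commands.
pipeline {
    agent any
    stages {
        stage('Build') {
            steps { sh 'make build' }
        }
        stage('Test') {
            steps { sh 'make test' }
        }
        stage('Deploy') {
            steps { sh 'make deploy' }
        }
    }
}
```

Because the whole flow lives in one text file, a change to the workflow is reviewed and versioned like any other code change.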

The Use of Jenkins Pipeline

Jenkins has proven effective at implementing continuous integration, testing, and deployment to produce good quality software.

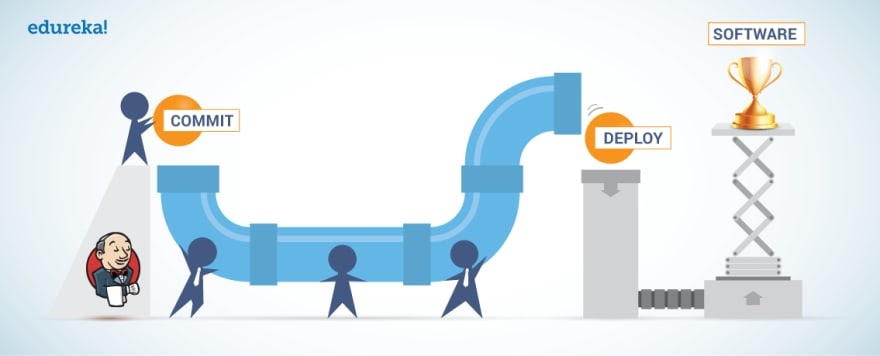

When it comes to continuous delivery, Jenkins uses a feature called Jenkins Pipeline. In order to understand its purpose, we have to understand what continuous delivery is and why it is important.

In short, continuous delivery is the capability to release software at any time. It is a practice which ensures that the software is always in a production-ready state. What does this mean? Every time there is a change to the code or the infrastructure, the software team must build and test it quickly using various automation tools. Afterward, the build is ready for production.

So, by speeding up the delivery process, the development team will get more time to implement any required feedback. Continuous integration and continuous delivery together are what move the software from build to production at a faster rate.

Continuous delivery ensures that the software is built, tested, and released more frequently. Also, it reduces the cost, time, and risk of incremental software releases.

Types of Jenkins Pipeline

A Jenkins pipeline can be written in one of two syntaxes:

- Declarative pipeline syntax

- Scripted pipeline syntax

A declarative pipeline is a relatively new feature that supports the pipeline-as-code concept. It makes the pipeline code easier to read and write. This code is written in a Jenkinsfile, which can be checked into a source control management system such as Git.

On the other hand, a scripted pipeline is the traditional way of writing the code. It is often written directly in the Jenkins UI, although it can also be stored in a Jenkinsfile. Though both kinds of pipeline are based on the Groovy DSL, the scripted pipeline stays closer to general-purpose Groovy since it was the first pipeline syntax built on the Groovy foundation.

However, writing Groovy scripts did not suit all users. So, the declarative pipeline was introduced to offer a simpler, more structured syntax. It is defined within a block labeled ‘pipeline’, whereas the scripted pipeline is defined within a ‘node’ block.
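To make the difference concrete, here is the same one-stage pipeline in both syntaxes. These are two alternative files rather than one, and the stage name and message are purely illustrative.

```groovy
// Declarative syntax: everything lives inside a 'pipeline' block.
pipeline {
    agent any
    stages {
        stage('Build') {
            steps {
                echo 'Building...'
            }
        }
    }
}
```

```groovy
// Scripted syntax: the work is wrapped in a 'node' block.
node {
    stage('Build') {
        echo 'Building...'
    }
}
```

The scripted version is shorter here, but it leaves the structure up to the author, while the declarative version enforces the same layout in every pipeline.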

Jenkinsfile

A Jenkinsfile is a text file that stores the entire workflow as code. It can be checked into an SCM repository alongside the project's source code. How is this advantageous? It enables the developers to access, edit, and review the pipeline code at all times.

The file is written using the Groovy DSL. You can create it in a text or Groovy editor, or through the configuration page on the Jenkins instance.

Pipeline Concepts

Pipeline

This is a user-defined block that contains all the processes such as build, test, and deploy. It is a collection of all the stages in a Jenkinsfile. All the stages and steps are defined within this block, so it is the key block of the declarative pipeline syntax.

Node

A node is a machine that executes an entire workflow. It is a key part of the scripted pipeline syntax.

Mandatory Sections

A declarative pipeline has several mandatory sections: an agent, stages, and steps must all be defined within the pipeline block. A scripted pipeline does not enforce this structure, although it uses comparable node and stage blocks.

Agent

The agent directive tells Jenkins where to run the pipeline or a stage, and instructs it to allocate an executor for the build. This helps to distribute the workload across different agents and lets a single Jenkins instance execute several projects at once.

You can specify a single agent for an entire pipeline or allot specific agents to execute each stage within a pipeline. Some of the parameters used with agents are the following:

- any

It runs the pipeline or stage on any available agent.

- none

You can apply this parameter at the root of the pipeline. It indicates that there is no global agent for the entire pipeline, so each stage must specify its own agent.

- label

It runs the pipeline or stage on an agent that carries the given label.

- docker

This parameter uses a Docker container as the execution environment for the pipeline or a specific stage.
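The four parameters above could appear together as in the following sketch. The label `linux` and the image name are illustrative assumptions, and the `docker` agent assumes that the Docker Pipeline plugin is installed and that Docker is available on the agent.

```groovy
// Sketch only: the label and the image name are illustrative.
pipeline {
    agent none   // no global agent; every stage declares its own

    stages {
        stage('Anywhere') {
            agent any
            steps { echo 'Runs on whichever agent is free' }
        }
        stage('Labeled') {
            agent { label 'linux' }
            steps { echo 'Runs on an agent labeled linux' }
        }
        stage('In a container') {
            agent { docker { image 'maven:3.9-eclipse-temurin-17' } }
            steps { sh 'mvn --version' }
        }
    }
}
```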

Stages

This block contains all the work that needs to be carried out. The work is specified in the form of stages. There can be more than one stage within this directive. Each stage performs a specific task.

Steps

You can define a series of steps within a stage block. These steps are carried out in sequence in order to execute the stage. There has to be at least one step within a steps block.

Advantages of Jenkins Pipeline

- It models simple-to-complex pipelines as code by using the Groovy DSL (Domain Specific Language)

- The code is stored in a text file called the Jenkinsfile, which can be checked into an SCM (Source Code Management) system

- It supports interactive workflows by accepting user input within the pipeline

- It is durable: running pipelines survive an unplanned restart of the Jenkins Master

- Jenkins Pipeline can restart from saved checkpoints

- It supports complex pipelines by incorporating conditionals, loops, fork/join operations, and parallel tasks

- Jenkins Pipeline can integrate with many other plugins
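A few of these advantages (parallel tasks, conditions, and user input) can be seen in one short sketch. The `make` targets and the branch name `main` are assumptions, and the `branch` condition is meant for multibranch pipeline projects.

```groovy
// Sketch only: make targets and the branch name are assumptions.
pipeline {
    agent any
    stages {
        stage('Tests') {
            // Run the two test suites side by side.
            parallel {
                stage('Unit') {
                    steps { sh 'make unit-test' }
                }
                stage('Integration') {
                    steps { sh 'make integration-test' }
                }
            }
        }
        stage('Deploy') {
            // Only deploy builds of the main branch.
            when { branch 'main' }
            steps {
                // Pause until a person approves the release.
                input message: 'Deploy to production?'
                sh 'make deploy'
            }
        }
    }
}
```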

Wrap Up

If you need a reliable and free automation tool, Jenkins won’t let you down. So, give it a try and see for yourself.
